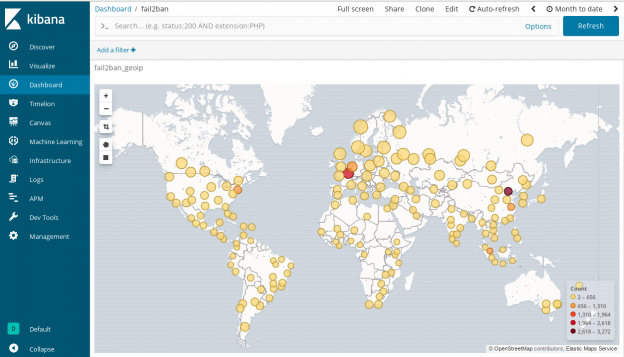

In the last post I wrote about how you can create a central syslog server. This time I will show you how you can use syslog-ng to parse Fail2ban log messages, enrich them with GeoIP metadata and send them to Elasticsearch. You can even visualize the Fail2ban logs in Kibana to see where the failed login attempts are coming from.

Update: This post has been reviewed, and all Fail2ban- and GeoIP-related content has been merged here from the previous post. Look no further: you will find everything you need here. Note that this guide requires Elasticsearch 7.x.


I know that I created the issue in the first place. However, I was able to fix it, and I will show you how I did it and how I can avoid it in the future.
